Iceberg won the table format war

Photo by Michail Dementiev on Unsplash

TL;DR: I believe Apache Iceberg won the table format wars, not because of a feature race, but primarily because of the open Iceberg spec. There are some features only available in Iceberg due to the breaking of compatibility with Hive, which was also a contributing factor to the adoption of the implementation.

Disclaimer: I am the Head of Developer Relations at Tabular and a Developer Advocate in both the Apache Iceberg and Trino communities. All of my 🌶️ takes are my biased opinion and not necessarily the opinion of Tabular, the Apache Software Foundation, the Trino Software Foundation, or the communities I work with. This post also goes into a bit of my personal story of leaving my previous company. That story relates to my reasoning, but the TL;DR above is there if you don’t care about the details.

My revelation with Iceberg

Two months ago, I made the difficult decision to leave Starburst, which was hands down the best job I’ve ever had up to this point. Since I left, I’ve gotten a lot of questions about my motivations for leaving and wanted to put some concerns to rest. My role at Starburst allowed me to get deeply involved in open source during working hours and showed me how I could help the community get traction on their work and drive the roadmap for many in the project. This was a new calling that overlapped with many altruistic parts of how I define myself and was deeply rewarding.

I made some incredible friends, some of whom have become invaluable mentors during this process of learning the nuances and interplay between venture capital and an open-source community. So why did I leave this job that I love so much?

Apache Iceberg Baby

Let’s time-travel (pun intended) to the first Iceberg episode of the Trino Community Broadcast. In true ADHD form, I crammed learning about Apache Iceberg well into the night before the broadcast with the creator of Iceberg, Ryan Blue. While setting up that demo, I really started to understand what a game-changer Iceberg was. I had heard the Trino users and maintainers talk about Iceberg replacing Hive but it just didn’t sink in for the first couple of months. I mean really, what could be better than Hive? 🥲

While researching I learned about hidden partitioning, schema evolution, and most importantly, the open specification. The whole package was just such an elegant solution to problems that had caused me and many in the Trino community failed deployments and late-night calls. Just as I had the epiphany with Trino (Presto at the time) of how big of a productivity booster SQL queries over multiple systems were, I had a similar experience with Iceberg that night. Preaching the combination of these two became somewhat of a mission of mine after that.

ANSI SQL + Iceberg + Parquet + S3

Immediately after that show, I wrote a four-blog series on Trino on Iceberg, did a talk, and built out the getting started repository for Iceberg. I was rather hooked on the thought of these two technologies in tandem. You start out with a system that can connect to any data source you throw at it and sees it as yet another SQL table. Take that system and add a table format that interacts with all the popular analytics engines people use today from Spark, Snowflake, and DuckDB, to Trino variants like EMR, Athena, and Starburst.

Standards all the way down

This approach to data virtualization is so interesting because each system gives you full leverage over vendors trying to lock you into their particular query language or storage format. It pushes vendors to support these open standards, which seems to put them in a vulnerable position compared to locking users in. However, that vulnerability is a fallacy, and I hope vendors will slowly begin to understand that. With the open S3 storage standard, open file standards like Parquet, open table standards like Iceberg, and the ANSI SQL spec closely followed by Trino, the entire analytics warehouse has become a modular stack of truly open components. This is not just open in the sense of the freedom to use and contribute to a project, but open in the sense of a standard that gives you the freedom to simply move between different projects.

This new freedom gives users the features of a data warehouse, the scalability of the cloud, and a free market of à la carte services to handle their needs at whatever price point they need at any given time. All users need to do in this new ecosystem is shop around and choose any open project or vendor that implements the open standard, and their migration cost will be practically nonexistent. This is the ultimate definition of future-proofing your architecture.

Back to why I left Starburst

Trino Community

I’ll quickly tie up why I left Starburst before I reveal why Iceberg won the table format wars. For the last three years, I have worked on building awareness around Trino. My partner in crime, Manfred Moser, had been in the Trino community and literally wrote the book on Trino. Together we spent long days and nights growing the Trino community. I loved every minute of it and honestly didn’t see myself leaving Starburst or shifting focus from Trino until it became an analytics organization standard.

Something became apparent, though. Trino community health was thriving, and there were many organic product movements taking place in the Trino community. Cole Bowden was boosting the Trino release process, getting us to the point of cutting Trino releases every 1-2 weeks, which is unprecedented in open-source. Cole, Manfred, and I did a manual scan over the pull requests and gracefully closed or revived outdated or abandoned ones. The Trino community is in great shape.

Iceberg Community

As I looked at Iceberg, the adoption and awareness were growing at an unprecedented rate, with Snowflake, BigQuery, Starburst, and Athena all announcing support between 2021 and 2022. However, nothing was moving the needle forward from a developer experience perspective. There was some amazing initial work done by Sam Redai, but there was still so much to be done. I noticed the Iceberg documentation needed improvement. While many vendors were advocating for Iceberg, nobody was putting consistent work into the vanilla Iceberg site. PMC members like Ryan Blue, Jack Ye, Russell Spitzer, Dan Weeks, and many others are doing a great shared job of driving roadmap features for Iceberg, but no individual currently has the time to dedicate to the cat herding, improving communication in the project, or bettering the developer and contributor experience. Since Trino was on stable ground, it felt imperative to move to Iceberg and fill in these gaps. When Ryan approached me with a Head of DevRel position at Tabular, I couldn’t pass up the opportunity. To be clear, I left Starburst but not the Trino community. Working on Iceberg also helps me in my mission to continue bringing together these two technologies that I believe in so much.

Tell us why Iceberg won already!

Moving back to the meat of the subject. My first blog in the Trino community covered what I once called the invisible Hive spec, to alleviate confusion around why Trino would need a “Hive” connector if it’s a query engine itself. The connector got that name because it translated Parquet files sitting in an S3 object store into a schema that could be read and modified by any query engine that knew the Hive spec. This had nothing to do with Hive the query engine, but Hive the spec. Why was the Hive spec invisible 👻? Because nobody wrote it down. It lived in the minds of the engineers who put Hive together, and in posts spread across Cloudera forums by engineers who had bashed their heads against the wall and reverse-engineered the binaries to understand this “spec”.
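To make the “invisible spec” a little more concrete, here is a minimal sketch of one widely used Hive-style convention: partition values encoded as `key=value` directory names in a file path. The bucket, table path, and the `parse_hive_partition_path` helper are all hypothetical, made up for illustration. This is not Trino’s or Hive’s actual code, just the kind of assumption every reader of such a layout had to agree on without a written spec.

```python
def parse_hive_partition_path(path: str) -> dict:
    """Extract partition values from `key=value` directory segments.

    Hypothetical helper for illustration only. Values come back as plain
    strings because nothing in the path says what type they should be.
    """
    values = {}
    # Skip the final segment, which is the data file name.
    for segment in path.split("/")[:-1]:
        if "=" in segment:
            key, _, value = segment.partition("=")
            values[key] = value
    return values


# Made-up object store path following the directory convention.
example_path = (
    "s3://example-bucket/warehouse/events/"
    "day=2023-06-27/country=US/part-00000.parquet"
)
partition_values = parse_hive_partition_path(example_path)
print(partition_values)
```

Notice how much is left unsaid by the layout itself: the column types, how special characters are escaped, and what happens when a directory doesn’t follow the pattern. Those are exactly the details a written spec pins down.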

Why do you even need a spec?

Having an “invisible” spec was rather problematic, as every implementation of that spec ran on different assumptions. I spent days searching Hortonworks/Cloudera forums for a fix to an error, only to find solutions that, for whatever reason, didn’t work on my instance of Hive. There were also implicit dependencies that came with using the Hive spec. It’s actually incorrect to call it a spec at all, because a spec should be independent of platform, programming language, and hardware, while including only the minimal implementation details required for interoperability between implementations. SQL, for instance, is a declarative language that runs on systems written in C++, Rust, and Java, and it doesn’t get into the business of telling query engines how to answer a query, just what behavior is expected.

The open spec is why Iceberg won

By the time there were various iterations of Hive from the Hadoop and Big Data boom, there was no central authority in a position to put a stake in the ground and standardize this spec. While you may imagine that we would have learned our lesson, until Iceberg, none of the table format projects officially formalized their assumptions and specifications. Delta Lake and Hudi extended the Hive model to make migration from Hive simpler but kept a few of the issues that Hive introduced, like exposing the partitioning format to users running analysis.
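Here is a toy model of why exposing the partitioning format to users matters. It is a sketch under stated assumptions, not Iceberg’s or Hive’s real planner: the `day_transform`, `hive_style_scan`, and `hidden_partition_scan` names are hypothetical, and the data is made up. In the Hive-style scan, partitions are only pruned when the user also filters on the partition column. In the hidden-partitioning style, the planner derives the partitions to read from the predicate on the timestamp itself.

```python
from datetime import date, datetime

# Made-up events: users only ever see `id` and `ts`.
EVENTS = [
    {"id": 1, "ts": datetime(2023, 6, 26, 9, 30)},
    {"id": 2, "ts": datetime(2023, 6, 27, 14, 0)},
    {"id": 3, "ts": datetime(2023, 6, 27, 23, 59)},
    {"id": 4, "ts": datetime(2023, 6, 28, 0, 1)},
]


def day_transform(ts: datetime) -> date:
    """Hypothetical partition transform: derive the day from a timestamp."""
    return ts.date()


def write_partitioned(rows):
    """Group rows into partitions keyed by the transform of `ts`."""
    partitions = {}
    for row in rows:
        partitions.setdefault(day_transform(row["ts"]), []).append(row)
    return partitions


def hive_style_scan(partitions, ts_start, ts_end, day_filter=None):
    """Prune only when the user supplies an explicit partition filter."""
    scanned, out = 0, []
    for day, rows in partitions.items():
        if day_filter is not None and day != day_filter:
            continue
        scanned += 1
        out.extend(r for r in rows if ts_start <= r["ts"] < ts_end)
    return out, scanned


def hidden_partition_scan(partitions, ts_start, ts_end):
    """Derive the partition range from the `ts` predicate, then prune."""
    first_day, last_day = day_transform(ts_start), day_transform(ts_end)
    scanned, out = 0, []
    for day, rows in partitions.items():
        if not (first_day <= day <= last_day):
            continue
        scanned += 1
        out.extend(r for r in rows if ts_start <= r["ts"] < ts_end)
    return out, scanned


partitions = write_partitioned(EVENTS)
start, end = datetime(2023, 6, 27, 0, 0), datetime(2023, 6, 27, 23, 0)

rows_a, scanned_a = hive_style_scan(partitions, start, end)
rows_b, scanned_b = hidden_partition_scan(partitions, start, end)
print(scanned_a, scanned_b)
```

Both scans return the same rows, but the Hive-style one touches every partition unless the analyst knows the physical layout and adds a second, redundant filter on the partition column.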

Open specifications and vendor politics

You may be skeptical that an open specification for a table format holds such weight, but it crept its way into a well-known feud between two large analytics vendors of the day, Databricks and Snowflake. Databricks has been the leading vendor in the data lakehouse market while Snowflake dominated the data warehouse market. Snowflake’s original strategy involved encouraging movement from the data lakehouse market back to the data warehouse market, while Databricks’ original strategy was to do the exact opposite. Both reflected an outdated trap that many vendors still fall prey to: the idea that locking users in will help your business and stakeholders reduce churn and keep customers in the long run. Vendor lock-in was once a decades-long play, but as B2C consumer expectations creep into B2B, we are seeing a gradual shift of practitioners demanding interoperability from vendors to give them the level of autonomy they have experienced with open-source projects.

This surfaced with Snowflake when they announced that they would be offering support for an Iceberg external table functionality in 2022. At first, I raised my eyebrow at this as I figured this was a feeble attempt for Snowflake to market itself as an open-friendly warehouse when the external table would be nothing but an easy way to migrate data from the data lake to Snowflake. Whatever their motives, this was good visibility for Iceberg and I was thrilled that Snowflake was showcasing the need for an open specification, gimmick or not. This even pressured other competing data warehouses like BigQuery to add Iceberg support.

The final signal that the open Iceberg spec won

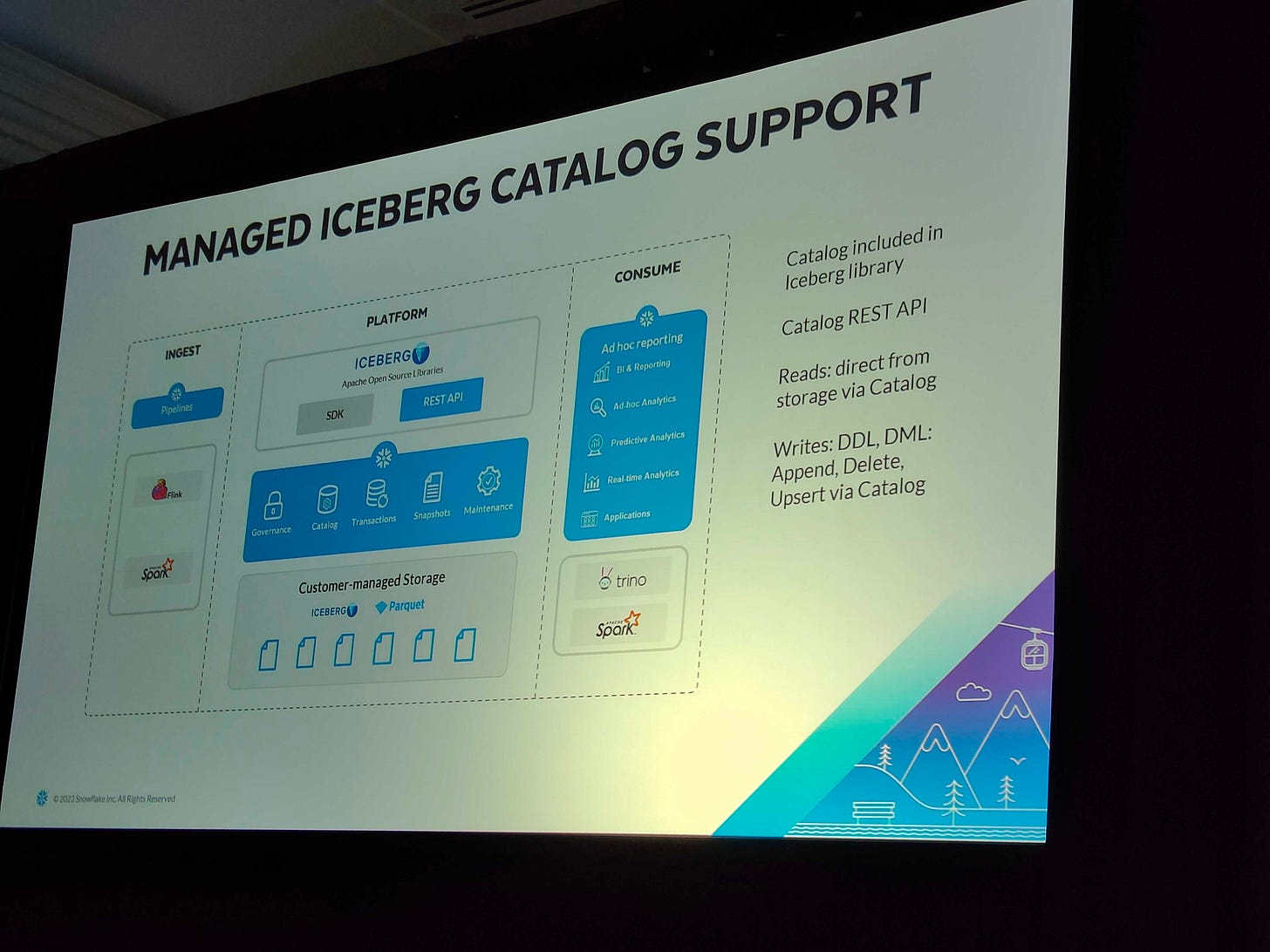

I didn’t, however, expect to see what took place at both Snowflake Summit and Data + AI Summit this year in 2023. If you don’t know, these are Snowflake’s and Databricks’ big events, which happened in the same week as an extension of their feud. Snowflake had already dropped some hints that they were ramping up their Iceberg support, but they were doing a great job of keeping the details under wraps. The final reveal came at Snowflake Summit: Snowflake now offers managed Iceberg catalog support.

I was thrilled to see that finally, a data warehouse vendor understands there’s no way to beat open standards and that they should adopt open storage as part of their core business model. What’s even more notable about this picture is that they show both Trino and Spark engines as open compute alternatives to their own engine. This was beyond my expectations for Snowflake, and definitely showed me they were heading in the right direction for their customers.

Meanwhile, in a California town not far away, Databricks had its own response to Snowflake’s announcement stored up. Delta Lake 3.0 was announced, and it now supports compatibility across Delta Lake, Iceberg, and Hudi (albeit limited compatibility for Iceberg for now). Whatever happens, there is now an opportunity for Databricks customers to trial Iceberg, and this should excite everyone. We’re one step closer to having this spec become the common denominator. With both of these moves, one from a company that initially only wanted its proprietary format to win and one from a company that built on a competing format, I have the opinion that Iceberg has won the format wars.

Now I don’t want to act like these vendors simply have users’ best interests in mind. All they want is for you to choose them. I work for a vendor, and we also want your money. What I am seeing, though, is that the industry is now trending towards incentivizing openness as more customers demand it. In order for companies to stay ahead, they must embrace this fact rather than fight it. What is rather historic about this moment is that since the dawn of analytics and the data warehouse, there has been vendor lock-in on many fronts of the analytics market. This, to me, signals the nail in the coffin of any capability for vendors to do this on the level playing field of open standards. It’s now up to the vendors to keep you happy enough that you don’t churn. This in the long run is good for users and vendors and will ultimately drive better products.

One quick aside: some may mention that Hudi recently added a “spec”, so why does Iceberg having a spec give it the winning vote? I recommend you go back and reread the “Why do you even need a spec?” section earlier in this blog, then look at both the Iceberg spec and the Hudi “spec” and determine which one satisfies the criteria. Hudi’s “spec” exposes Java dependencies, making it unusable for any system not running on the JDK, doesn’t clarify schema, and has a lot of implementation details rather than leaving those to the systems that implement it. This “spec” is something closer to an architecture document than an open spec.

Will the Iceberg project drive Delta Lake and Hudi to nonexistence?

Maybe, or maybe not. That really depends on the feature set that these three table formats offer and what the actual value they bring to the users is. In other words, you decide what’s important, we decide what we’re going to support, and vendors and the larger data community decide who stays and who goes. This simply boils down to what users want, and if there is enough deviation for it to make sense for multiple formats to exist. Having competition in open-source is every bit as healthy as having competition in the vendor space – with some exceptions:

Exception 1: The projects don’t collectively build on an open specification. This enables vendor lock-in under the guise of being “open” simply because the source is available.

Exception 2: The projects are just duplicated efforts of each other. This is a sad waste of time and adds analysis paralysis for users. If two projects have clear value added in different domains with some overlap, then the choice becomes clearer based on the use case.

Exception 3: Any of the competing OSS projects primarily serve the needs of one or a few companies over the larger community. This exception is an anti-pattern of innovation brought up in the Innovator’s Dilemma. Focusing on the needs of the few will eventually force the majority to move to the next thing. Truly open projects will continue to evolve at the pace of the industry.

Just because I’m touting that Apache Iceberg has “won the table format wars” with an open spec, does not mean I am discrediting the hard work done by the competing table formats or advocating not to use them. Delta Lake has made sizeable efforts to stay competitive on a feature-by-feature basis. I’m also still good friends with Mr. DeltaLake himself, Denny Lee. I hold no grudge against these amazing people trying to do better for their users and customers. I am also excited at the fact that all formats now have some level of interoperability for users outside of the Iceberg ecosystem!

And…Hudi?

I wrestled with my take on Hudi. I really enjoyed working with the folks from Hudi, and while I usually follow the “don’t say anything unless it’s nice” rule, it would be disingenuous not to give my real opinion on this matter. I initially quoted Reddit user u/JudgingYouThisSecond to avoid using my own words, but even their take doesn’t quite capture my thoughts concisely:

Ultimately, there is room enough for there to be several winners in the table format space:

- Hudi, for folks who care about or need near-real-time ingestion and streaming. This project will likely be #3 in the overall pecking order as it's become less relevant and seems to be losing steam relative to the other two in this list (at least IMO)

- Delta Lake, for people who play a lot in the Databricks ecosystem and don't mind that Delta Lake (while OSS) may end up being a bit of a “gateway drug” into a pay-to-play world

- Iceberg, for folks looking to avoid vendor lock-in and are looking for a table format with a growing community behind it.

While my opinion may change as these projects evolve, I consider myself an “Iceberg Guy” at the moment.

I feel like the open-source Hudi project is just not in a state where I would recommend it to a friend. I once thought perhaps Hudi’s upserts made its complexity worth it, but both Delta and Iceberg have improved on this front. My opinion from supporting all three formats in the Trino community is that Hudi is an overly complex implementation with many leaky abstractions, such as exposing Hadoop and other legacy dependencies (e.g. the KryoSerializer), and that it doesn’t actually provide proper transaction semantics.

What I personally hope for is that we converge on the minimum set of table format projects needed to scratch different, non-overlapping itches, all adhering to the Iceberg specification. In essence, really only the Iceberg spec has won, and Iceberg is currently the only implementation to support it. I would be thrilled to see the Delta Lake and Hudi projects support the Iceberg spec. That would make this a fair race and change the question to: which Iceberg implementation won the most adoption? That game will be fun to play. Imagine Tabular, Databricks, Cloudera, and Onehouse all building on the same spec!

Note: Please don’t respond with silly performance benchmarks with TPC (or any standardized dataset in a vendor-controlled benchmark) to suggest the performance of these benchmarks is relevant to this conversation. It’s not.

Thanks for reading, and if you disagree and want to debate or love this and want to discuss it, reach out to me on LinkedIn, Twitter, or the Apache Iceberg Slack.

Stay classy Data Folks!

#iceberg #opensource #openstandard
